Should Middle Powers Worry about Kill Switch Scenarios for Data Centers?

If a foreign hyperscaler runs a data center, they could technically switch it off at any time. Some think this risk is exaggerated. Reality is more complex for middle powers.

How can middle powers stay relevant in a world where AI becomes transformative, but its development is dominated by the two superpowers, the US and China? Questions around sovereignty and geopolitical alignment take center stage in recent government initiatives, including the US-led Pax Silica, South Korea’s “AI Squid Game” and the Franco-German Digital Sovereignty summit, and in publications by the White House, RAND, TBI and CSIS, among others.

For various reasons, middle powers would prefer to have their own sovereign AI capabilities. But when it comes to frontier models, they simply don’t have any – and so a “buy local” approach isn’t on the table, at least for the time being. The situation is different for compute.

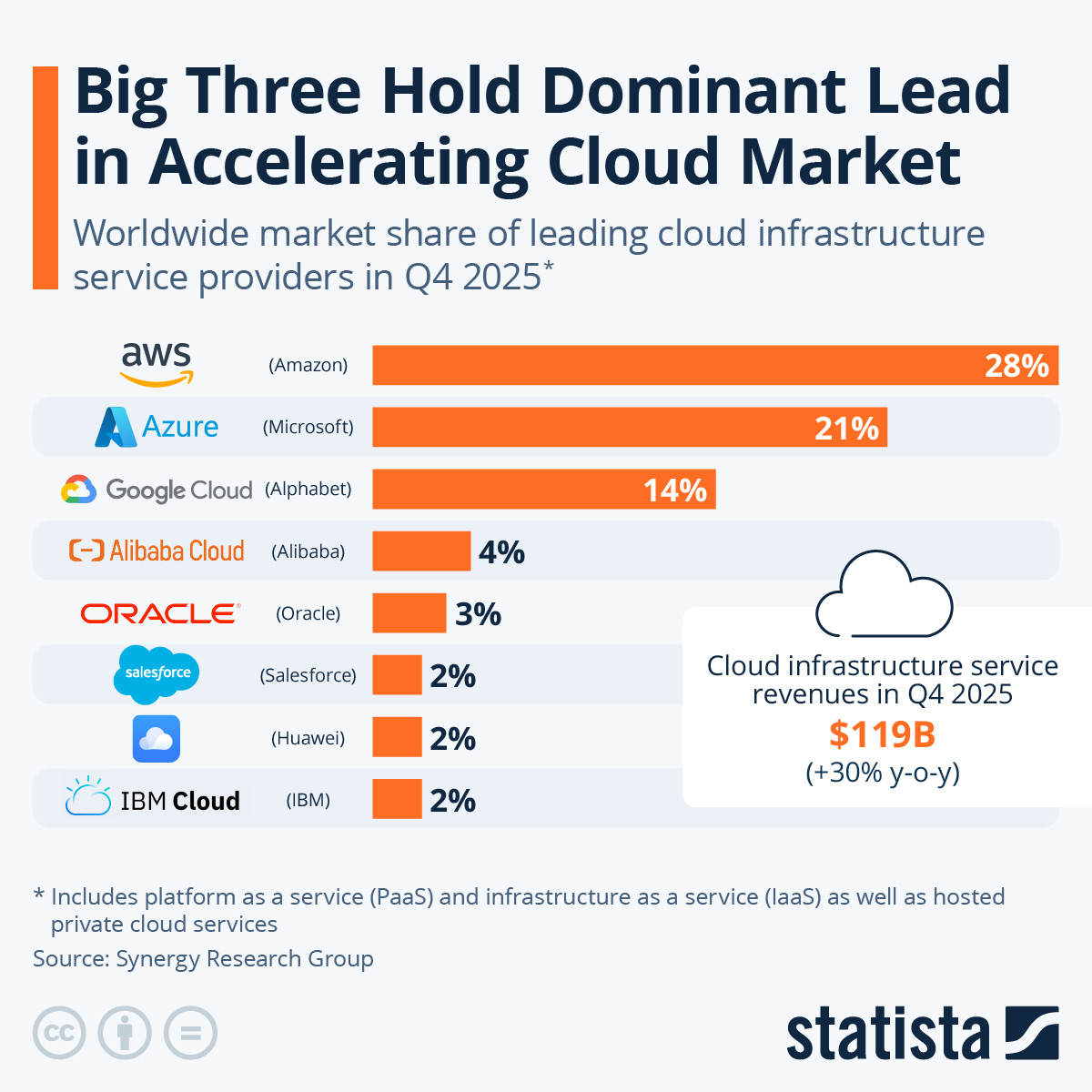

On the one hand, US hyperscalers dominate the global cloud market and middle powers have very little sovereign capacity right now – by which I mean data centers on domestic soil, run by domestic operators on their proprietary or on open-source software. In fact, the Trump Administration’s AI Action Plan, with its focus on a “full stack” export of American AI across hardware and software, explicitly pushes back against middle power sovereignty ambitions.

On the other hand, building sovereign data centers is much easier for middle powers than building their own frontier models: They have domestic cloud companies that could basically start ramping up capacity tomorrow – though at what scale and quality remains uncertain.

Two radical views

I’ll come back to execution later, but let’s start with the strategic question: How much compute sovereignty should middle powers aim for? There are two ‘radical’ answers:

On one view – increasingly popular among experts – they should mostly just let the hyperscalers build. Middle powers’ global share of compute is declining rapidly, and they need a fast solution to prevent falling behind on AI due to unmet compute needs. Hyperscalers like Microsoft Azure, AWS or Google Cloud are simply the world’s most capable actors to provide that solution. Therefore, middle powers should focus on ensuring fast permitting and grid connections, and let the hyperscalers work their magic for all but the most sensitive workloads.

On another view – now quite prevalent in mainstream discourse – this would be a fatal mistake: It incurs a dangerous dependence in geopolitically volatile times, where realpolitik and national interests weigh more heavily than norms-based international cooperation. As a country’s economy and public services increasingly depend on AI, running it on data centers that a foreign actor could technically switch off at will is a severe liability.

The first view sees the “kill switch scenario” as an exaggerated fear. The second view takes this scenario very seriously and sees sovereign compute capacity as a national imperative. However, neither of them is nuanced enough. I’ll argue that the right stance for middle powers is, well, somewhere in the middle.

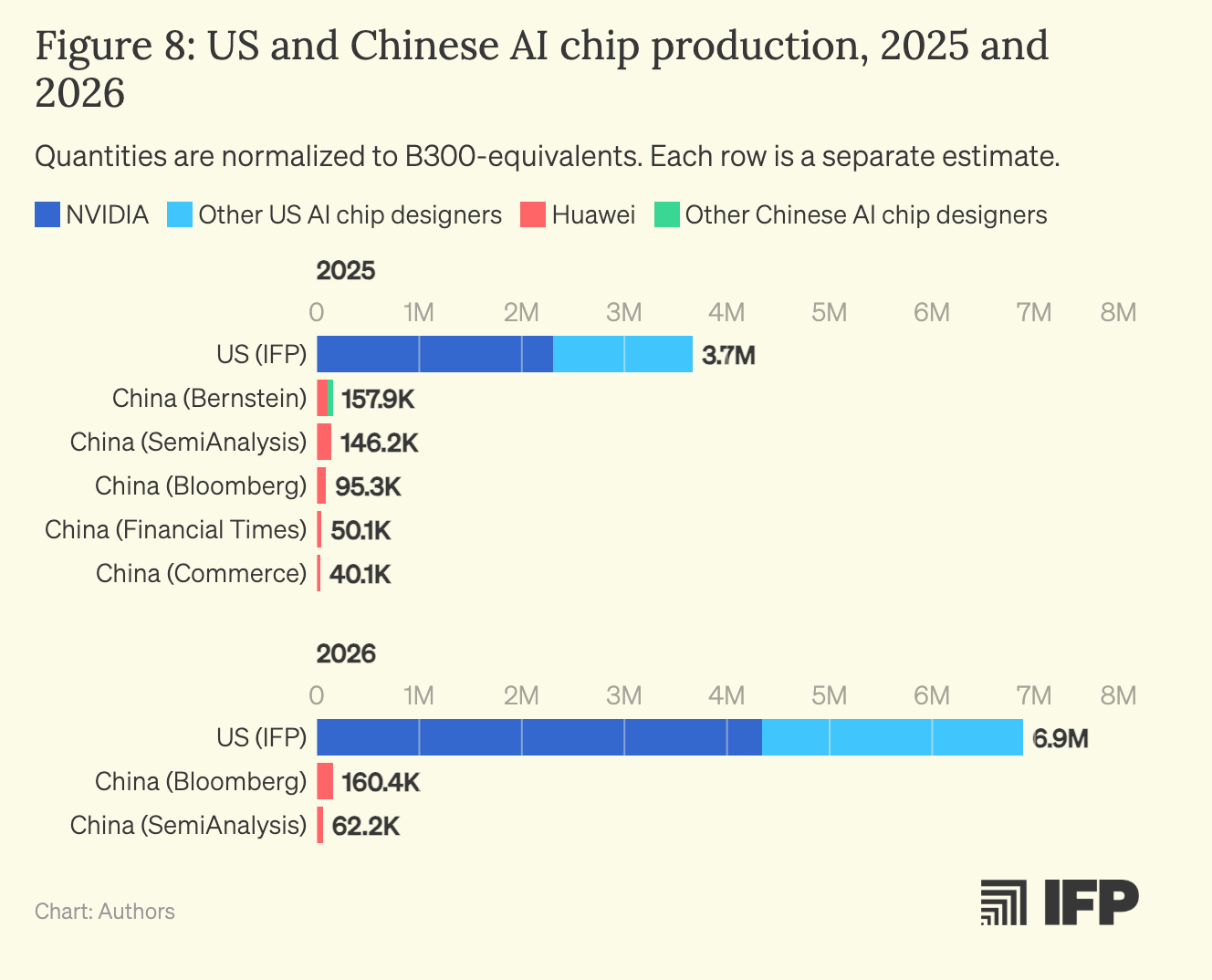

One important preliminary: In general, middle powers seeking access to frontier AI capabilities and infrastructure can align with either of the two AI heavyweights, the US or China. But for large-scale AI compute, only the US is a viable partner at this point. Due to US export controls, China doesn’t have enough compute even for its own domestic needs, and Chinese AI chip manufacturing lags too far behind to support meaningful export programs in the next few years. This is why the following discussion focuses primarily on the US as a potential exporter of AI compute, though similar (and sometimes more pressing) considerations around sovereignty would arise if middle powers imported compute from China.

Complacency is risky

First of all, I don’t think middle powers should be complacent about the kill switch scenario.

Yes, it’s very unlikely that a country would actually switch off allied data centers. Doing so would be completely against its companies’ interests, and hyperscalers – very powerful political actors in their own right – would lobby fiercely against it.

Still, there are at least three arguments for taking the kill switch scenario seriously:

First, the scenario is not – as I sometimes hear people suggest – merely an unfounded threat narrative pushed by middle power cloud companies with an interest in protectionism. Quite the contrary, security experts explicitly discuss the ability to cut off cloud services as a geopolitical lever. For example, a commentary by CNAS/RAND researchers argues that, when it comes to AI compute, the US should follow a “rent, not sell” approach towards China, where it offers cloud services instead of chip exports – the advantage being that for cloud services, “access can be shut off at any stage”. This moves the idea of pulling the plug on cloud compute into the Overton window, even if the discussion here is explicitly in a US-China context.

Second, a data center kill switch confers leverage even if it’s unlikely to ever be triggered. By analogy, it’s also unlikely that North Korea would launch a nuclear attack against another country, and doing so would be completely against its interests. Still, I’d very much prefer that it didn’t have that option. The mere fact that it does makes the difference between a poor rural economy without any leverage and a country that commands attention at the highest levels of international diplomacy. Similarly, the mere fact that a foreign actor could switch off a middle power’s AI data centers – and everything that depends on them, as AI diffuses through society at an unprecedented pace – gives it additional leverage.

One might object that the US has more than enough threat potential anyway – e.g. through its military superiority among NATO countries or the fact that even “sovereign” data centers are dependent on US GPUs. A data center kill switch, one might suggest, doesn’t add to that in a meaningful way. Answering this comprehensively would require an assessment of all the different dependencies between the US and various middle powers. Here, I’ll just focus on these two examples, which are particularly relevant.

The US clearly has a geopolitical interest in Europe’s ability to defend itself. I don’t think the current administration seriously calls this into question; what it wants is for Europe to bear a greater share of the cost. With data centers, it seems different: While the US will care a lot about Europe not being invaded by Russia, it won’t care as much about the reversible economic effects of a compute shortage – and so will be more willing to use this in negotiations.

Leveraging GPUs is much less attractive than leveraging cloud services, because the former comes with a time lag: If the US stops its GPU exports to a country, the effects will mostly be felt after a few years, depending on how fast the chips depreciate. But if a hyperscaler cuts off access to a cloud service, the effects are felt immediately. GPU dependence is thus less of a threat, as middle powers have more time to re-negotiate, which strengthens their bargaining position.[1]

Third, I think people tend to underestimate the kill switch scenario because of its adversarial framing: Would a foreign actor really threaten to disrupt vital services in broadly allied countries to coerce them into doing whatever it wants? Let’s assume that this is indeed far-fetched. Still, there is a much more mundane reason to disrupt cloud services offered to another country: In a world where inference compute is scarce (already true) and productivity gains from AI are enormous (not yet true, but we’re getting there), hyperscalers might divert their inference compute back to their home economy – not out of hostile intent, but simply in order to prioritize national needs. From a middle power perspective, sovereign compute is also a hedge against those scenarios.

To conclude: the US switching off overseas data centers might be far-fetched. But as the past months have shown, we’re living in a world where far-fetched things happen. Moreover, there are less adversarial scenarios in which middle powers cannot count on US compute, either. The right response, in my view, is to protect against this by investing in sovereign compute. Yet I think there’s also a way of pushing this too far.

Three levels of sensitivity

Let’s distinguish, by way of example, three levels of sensitivity for AI workloads:

Low sensitivity: the average ChatGPT prompt of a consumer planning their next trip to Venice, or a local car dealer using Claude Cowork to automate invoicing.

Medium sensitivity: industrial use cases involving trade secrets, basic government services, or personal health applications containing private information.

High sensitivity: everything where national security or public safety is at stake, including the use of AI for vital government functions or in critical infrastructure.

One crucial question, to which I hope to return in the future, concerns the relative shares of these workloads in a typical middle power. Here, I’ll focus on a different question: How do sovereignty requirements differ across sensitivity tiers?

For high-sensitivity workloads, middle powers should want fully sovereign data centers: built in their jurisdiction, with a domestic operator using their own (or open-source) software. Where so much is at stake, the arguments above make a compelling case for sovereign solutions. Short of a kill switch, concerns around data leakage and the US CLOUD Act also feed into this.

For low-sensitivity workloads, requiring sovereignty would go too far. Building enough sovereign data centers to meet this demand would be very costly, and that cost wouldn’t be justified by the small risk of disrupted non-vital services. Sovereign clouds are currently unable to compete with hyperscalers on either price or service quality, so forcing customers to rely on them across the board would hamper innovation and growth.

Medium-sensitivity workloads pose the trickiest questions. On the one hand, there are good reasons to restrict them to sovereign clouds: Disruptions in these areas undermine a country’s basic services and economic backbone. Moreover, sector-specific data in industry or medicine are among the few strengths that some middle powers have in AI, and companies will often be reluctant to trust foreign cloud providers with them. On the other hand, the range of use cases is plausibly much broader than for the narrow high-sensitivity workloads, and so building enough sovereign capacity to cover the medium tier is very ambitious. Moreover, disruptions in these areas are costly, but don’t immediately threaten national security.

Before I lay out my favored options for the medium-sensitivity case, I need to take a short detour.

The French model

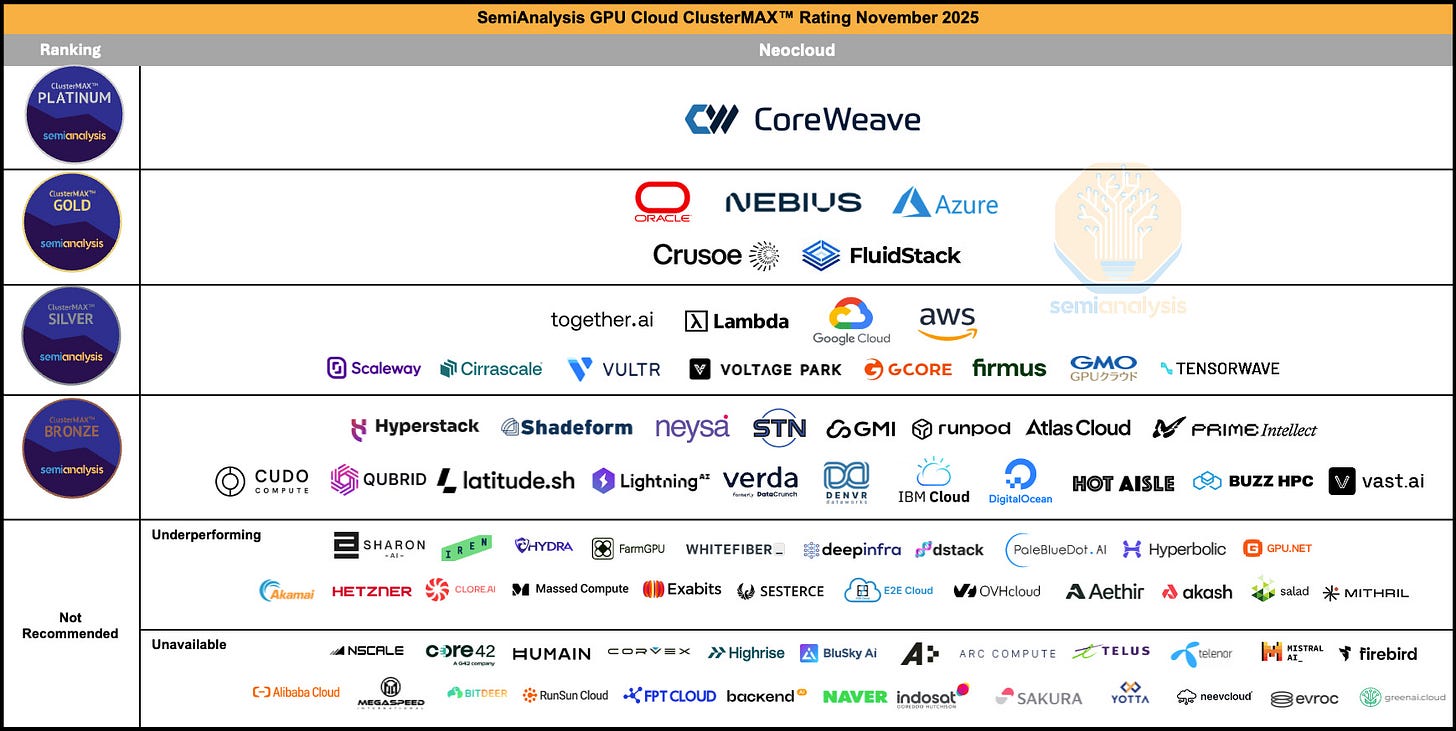

Every middle power that wants at least some sovereign compute faces the following question: Who’s going to build those fully sovereign data centers? The answer is not obvious: In SemiAnalysis’s ClusterMAX rating, US cloud providers outperform European alternatives across criteria such as pricing, reliability, and (worst of all in this context) security. While notable exceptions exist, such as Fluidstack (UK), Nebius (Netherlands), Scaleway (France) and Gcore (Luxembourg), most European providers are far behind, and many companies that play a key role in EU sovereignty discourse, such as Deutsche Telekom or the Schwarz Group, aren’t even mentioned. This seems to create a dilemma: Workloads that are vital to a country’s core functioning will either be sovereign or run on the market’s best possible product, but not both.

France has taken a distinctive approach to avoid this dilemma, which could serve as a template for other middle powers. Its “trusted cloud doctrine” mandates that French government agencies and certain private operators may process sensitive data in a cloud service only if it has the SecNumCloud certification, Europe’s most stringent cloud sovereignty standard. To obtain it, a cloud provider must be at least 61% European-owned and immune to extraterritorial laws like the US CLOUD Act.

In practice, this has resulted in a licensing model: For example, since a foreign company like Google cannot achieve the certification on its own, it instead licenses its software code to S3NS, a joint venture between Google and the French company Thales. Thales employees have full control over the code and use it to serve sensitive workloads in France. Since Thales runs the code on its own data centers, Google could not simply shut them down or access the data (unless the software is backdoored, but S3NS employees have access to the source code and can check). The deal is mutually beneficial: Google earns licensing profits, while Thales keeps full legal and operational control.

How much sovereignty do middle powers want?

Let’s come back to medium-sensitivity workloads. In an ideal world, middle powers have their own successful cloud providers, able to competitively serve these workloads with their own proprietary (or open-source) solutions. In the absence of such companies, middle powers will have to rely at least partly on foreign providers. There are two options for still getting decent amounts of sovereignty out of this.

The first option is to adopt an extended version of the French model, requiring hyperscalers to share their source code with national champions to serve workloads of both high and medium sensitivity. Carving out these use cases in a clean way would be legally tricky, but is probably doable. On the one hand, adopting this model would be somewhat escalatory vis-à-vis the US, which could challenge it through legal or other means. On the other hand, middle powers could point to France as a successful precedent, and sweeten the deal with favorable conditions for hyperscalers in other domains.

If middle powers don’t want to go that far, the second option is to rely on the hyperscalers’ own sovereign cloud solutions, which are tailored to middle power needs. Here, hyperscalers would build data centers in a middle power and commit to processing data only on domestic soil, with customer-managed encryption keys. In the case of European host countries, the data centers are sometimes operated by a separate EU corporate entity. If the US government forced the hyperscaler to shut down such a data center, a contractual continuity clause is meant to enable a domestic operator to take over its operations: For example, Microsoft stores a copy of the data center’s software stack on a secure server in Switzerland, which the domestic operator would then have the right to access. One worry about this model is that since a US company remains the service provider, the CLOUD Act still bites. The other disadvantage is that during a crisis, the domestic provider would have to take over the operations from scratch, which creates more friction than if it had been the main operator all along.

That being said, both solutions largely dissipate kill switch worries: In case of disruption, a domestic company will be there to continue the operations. One remaining problem is that the domestic operator would now be cut off from security updates. This still seems better than an overnight shutdown, and the threat might become more manageable in a world of increasingly powerful coding agents with defensive cyber capabilities (though AI’s impact on the cyber offense-defense balance remains uncertain). Moreover, both solutions solve a key problem: They provide customers with relatively high levels of sovereignty without forcing them into sub-par domestic cloud solutions. Countries will have to decide whether the extra protection from the French model is worth the diplomatic hiccups.

Bottom line

Middle powers shouldn’t be complacent about kill switch scenarios. The mere option to shut down essential services gives foreign actors a lot of leverage, and access to foreign compute could be curtailed even without hostile intent, for instance if hyperscalers divert scarce capacity to their home economy. However, not every use case needs a sovereign solution. For many applications, hyperscalers still offer the best solution and countries should generally welcome their investment. The best hedge against kill switch scenarios is to clearly distinguish between different sensitivity tiers and prepare accordingly.

[1] This might be different in a world where GPUs themselves come with a remote kill switch. As far as we know, we’re not living in that world (yet?), so I’ll set that scenario aside.